With Phia’s AI, the new luxury is knowing what’s worth buying

AI has transformed how we shop—predicting trends, powering virtual try-ons and streamlining fashion logistics. Yet some of the biggest pain points remain: endless scrolling, too many tabs and never knowing if you’ve overpaid. That’s the gap Phia aims to close.

Co-founded by Phoebe Gates, daughter of Bill Gates, and climate activist Sophia Kianni, Phia was born in a Stanford dorm room and launched in April 2025. The app, available on mobile and as a browser extension, compares prices across more than 40,000 retailers and thrift platforms to show what an item really costs. Its hallmark feature, “Should I Buy This?”, instantly flags whether something is overpriced, fair or a genuine deal.

The mission is simple: make shopping smarter, fairer and more sustainable. In just five months, Phia has attracted more than 500,000 users, indexed billions of products and built over 5,000 brand partnerships. It also secured a US$8 million seed round led by Kleiner Perkins, joined by Hailey Bieber, Kris Jenner, Sara Blakely and Sheryl Sandberg—investors who bridge tech, retail and culture. “Phia is redefining how people make purchase decisions,” said Annie Case, partner at Kleiner Perkins.

Phia’s AI engine scans real-time data from more than 250 million products across its network, including Vestiaire Collective, StockX, eBay and Poshmark. Beyond comparing prices, the app helps users discover cheaper or more sustainable options by displaying pre-owned items next to new ones—helping users see the full spectrum of choices before they buy. It also evaluates how different brands perform over time, analysing how well their products hold resale value. This insight helps shoppers judge whether a purchase is likely to last in value or if opting for a second-hand version makes more sense. The result is a platform that naturally encourages circular shopping—keeping items in use longer through resale, repair or recycling—and resonates strongly with Gen Z and millennial values of sustainability and mindful spending.
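To make the idea concrete, here is a minimal sketch of how a “Should I Buy This?” style verdict and a resale-value check could be computed. Phia has not published its method, so everything here is an assumption for illustration: the function names `price_verdict` and `resale_retention`, the 10 percent tolerance band around the median, and the sample prices.

```python
from statistics import median


def price_verdict(listing_price, comparable_prices, tolerance=0.10):
    """Label a price against the median of comparable listings.

    Hypothetical rule, not Phia's actual algorithm: prices within
    +/- tolerance of the median count as "fair".
    """
    if not comparable_prices:
        raise ValueError("need at least one comparable price")
    reference = median(comparable_prices)
    if listing_price > reference * (1 + tolerance):
        return "overpriced"
    if listing_price < reference * (1 - tolerance):
        return "deal"
    return "fair"


def resale_retention(original_price, resale_price):
    """Share of the original price an item keeps on the resale market."""
    return resale_price / original_price


# Made-up prices for the same bag across new and pre-owned listings.
comparables = [420.0, 450.0, 390.0, 480.0, 310.0]
verdict = price_verdict(520.0, comparables)
```

A real system would also have to decide which listings count as comparable (same item, condition and size), which is likely the harder part of the problem.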

By encouraging transparency and smarter choices, Phia signals a broader shift in consumer technology: one where AI doesn’t just automate decisions but empowers users to understand them. Instead of merely digitizing the act of shopping, Phia embodies data-driven accountability—using intelligent search to help consumers make informed and ethical choices in markets long clouded by complexity. Retail analysts believe this level of visibility could push brands to maintain accurate and competitive pricing. Skeptics, however, argue that Phia must evolve beyond comparison to create emotional connection and loyalty. Still, one fact stands out: algorithms are no longer just recommending what we buy—they’re rewriting how we decide.

With new funding powering GPU expansion and advanced personalization tools, Phia’s next step is to build a true AI shopping agent—one that helps people buy better, live smarter and rethink what it means to shop with purpose.

Where Hollywood magic meets AI intelligence — Hong Kong becomes the new stage for virtual humans

In an era where pixels and intelligence converge, few companies bridge art and science as seamlessly as Digital Domain. Founded three decades ago by visionary filmmaker James Cameron, the company built its name through cinematic wizardry—bringing to life the impossible worlds of Titanic, The Curious Case of Benjamin Button and the Marvel universe. But today, its focus has evolved far beyond Hollywood: Digital Domain is reimagining the future of AI-driven virtual humans—and it’s doing so from right here in Hong Kong.

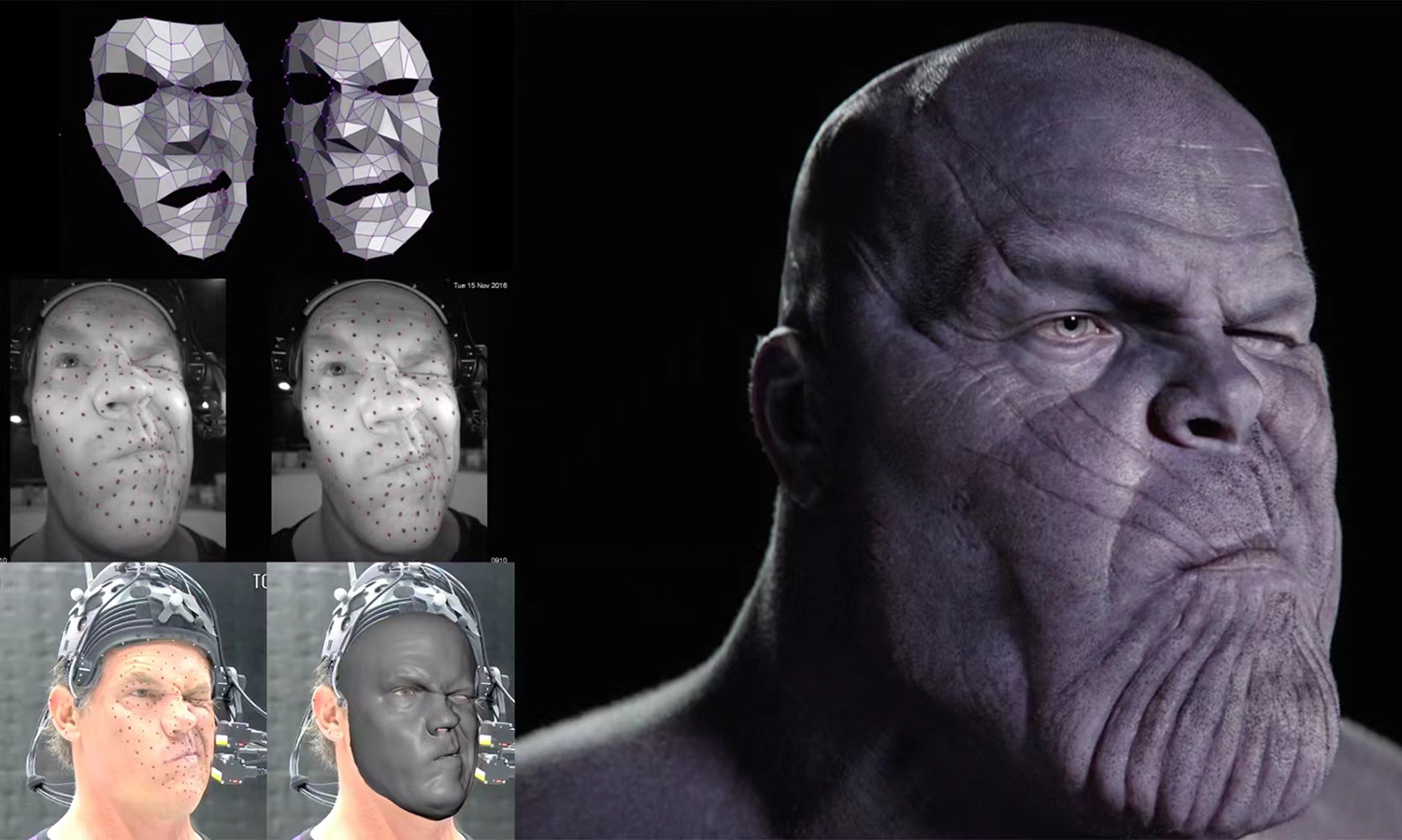

“AI and visual technology are merging faster than anyone imagined,” says William Wong, Chairman and CEO of Digital Domain. “For us, the question is not whether AI will reshape entertainment—it already has. The question is how we can extend that power into everyday life.”

Though globally recognized for its work on blockbuster films and AAA games, Digital Domain’s story is also deeply connected to Asia. A Hong Kong–listed company, it operates a network of production and research centers across North America, China and India. In 2024, it announced a major milestone—setting up a new R&D hub at Hong Kong Science Park focused on advancing artificial intelligence and virtual human technologies. “Our roots are in visual storytelling, but AI is unlocking a new frontier,” Wong says. “Hong Kong has been very proactive in promoting innovation and research, and with the right partnerships, we see real potential to make this a global R&D base.”

Building on that commitment, the company plans to invest about HK$200 million over five years, assembling a team of more than 40 professionals specializing in computer vision, machine learning and digital production. The team is still growing. “Talent is everything,” says Wong. “We want to grow local expertise while bringing in global experience to accelerate the learning curve.”

Digital Domain’s latest chapter revolves around one of AI’s most fascinating frontiers: the creation of virtual humans.

These are hyperrealistic, AI-powered virtual humans capable of speaking, moving and responding in real time. Using the advanced motion-capture and rendering techniques that transformed Hollywood visual effects, the company now builds digital personalities that appear on screens and in physical environments—serving in media, education, retail and even public services.

One of its most visible projects is “Aida”, the AI-powered presenter who delivers nightly weather reports on Radio Television Hong Kong (RTHK). Another initiative, now in testing, will soon feature AI-powered concierges greeting travelers at airports, able to communicate in multiple languages and provide real-time personalized services. Similar collaborations are under way in healthcare, customer service and education.

“What’s exciting,” says Wong, “is that our technologies amplify human capability, helping to deliver better experiences, greater efficiency and higher capacity. AI-powered virtual humans can interact naturally, emotionally and in any language. They can help scale creativity and service, not replace it.”

To make that possible, Digital Domain has designed its system for compatibility and flexibility. It can connect to major AI models—from OpenAI and Google to Baidu—and operate across cloud platforms like AWS, Alibaba Cloud and Microsoft Azure. “It’s about openness,” says Wong. “Our clients can choose the AI brain that best fits their business.”

Establishing a permanent R&D base in Hong Kong marks a turning point for the company—and, in a broader sense, for the city’s technology ecosystem. With the support of the Office for Attracting Strategic Enterprises (OASES) in Hong Kong, Digital Domain hopes to make the city a creative hub where AI meets visual arts. “Hong Kong is the perfect meeting point,” Wong says. “It combines international exposure with a growing innovation ecosystem. We want to make it a hub for creative AI.”

As part of this effort, the company is also collaborating with universities such as the University of Hong Kong, City University of Hong Kong and Hong Kong Baptist University to co-develop new AI solutions and nurture the next generation of engineers. “The goal,” Wong notes, “is not just R&D for the sake of research—but R&D that translates into real-world impact.”

The collaboration with OASES underscores how both the company and the city share a vision for innovation-led growth. As Peter Yan King-shun, Director-General of OASES, notes, the initiative reflects Hong Kong’s growing strength as a global innovation and technology hub. “OASES was set up to attract high-potential enterprises from around the world across key sectors such as AI, data science, and cultural and creative technology,” he says. “Digital Domain’s new R&D center is a strong example of how Hong Kong can combine world-class talent, technology and creativity to drive innovation and global competitiveness.”

Digital Domain’s story mirrors the evolution of Hong Kong’s own innovation landscape—where creativity, technology and global ambition converge. From the big screen to the next generation of intelligent avatars, the company continues to prove that imagination is not bound by borders, but powered by the courage to reinvent what’s possible.

A closer look at how reading, conversation, and AI are being combined

In the past, “educational toys” usually meant flashcards, prerecorded stories or apps that asked children to tap a screen. ChooChoo takes a different approach. It is designed not to talk at children, but to talk with them.

ChooChoo is an AI-powered interactive reading companion built for children aged three to six. Instead of playing stories passively, it engages kids in conversation while reading. It asks questions, reacts to answers, introduces new words in context and adjusts the story flow based on how the child responds. The goal is not entertainment alone, but language development through dialogue.

That idea is rooted in research, not novelty. ChooChoo is inspired by dialogic reading methods from Yale’s early childhood language development work, which show that children learn language faster when stories become two-way conversations rather than one-way narration. Used consistently, this approach has been shown to improve vocabulary, comprehension and confidence within weeks.

The project was created by Dr. Diana Zhu, who holds a PhD from Yale and focused her work on how children acquire language. Her aim with ChooChoo was to turn academic insight into something practical and warm enough to live in a child’s room. The result is a device that listens, responds and adapts instead of simply playing content on command.

What makes this possible is not just AI, but where that AI runs.

Unlike many smart toys that rely heavily on the cloud, ChooChoo is built on RiseLink’s edge AI platform. That means much of the intelligence happens directly on the device itself rather than being sent back and forth to remote servers. This design choice has three major implications.

First, it reduces delay. Conversations feel natural because the toy can respond almost instantly. Second, it lowers power consumption, allowing the device to stay “always on” without draining the battery quickly. Third, it improves privacy. Sensitive interactions are processed locally instead of being continuously streamed online.

RiseLink’s hardware, including its ultra-low-power AI system-on-chip designs, is already used at large scale in consumer electronics. The company ships hundreds of millions of connected chips every year and works with global brands like LG, Samsung, Midea and Hisense. In ChooChoo’s case, that same industrial-grade reliability is being applied to a child’s learning environment.

The result is a toy that behaves less like a gadget and more like a conversational partner. It engages children in back-and-forth discussion during stories, introduces new vocabulary in natural context, pays attention to comprehension and emotional language and adjusts its pace and tone based on each child’s interests and progress. Parents can also view progress through an optional app that shows what words their child has learned and how the system is adjusting over time.

What matters here is not that ChooChoo is “smart,” but that it reflects a shift in how technology enters early education. Instead of replacing teachers or parents, tools like this are designed to support human interaction by modeling it. The emphasis is on listening, responding and encouraging curiosity rather than testing or drilling.

That same philosophy is starting to shape the future of companion robots more broadly. As edge AI improves and hardware becomes smaller and more energy efficient, we are likely to see more devices that live alongside people instead of in front of them. Not just toys, but helpers, tutors and assistants that operate quietly in the background, responding when needed and staying out of the way when not.

In that sense, ChooChoo is less about novelty and more about direction. It shows what happens when AI is designed not for spectacle, but for presence. Not for control, but for conversation.

If companion robots become part of daily life in the coming years, their success may depend less on how powerful they are and more on how well they understand when to speak, when to listen and how to grow with the people who use them.

How ECOPEACE uses autonomous robots and data to monitor and maintain urban water bodies.

South Korea–based water technology company ECOPEACE is working on a practical challenge many cities face today: keeping urban water bodies clean as pollution and algae growth become more frequent. Rather than relying on periodic cleanup drives, the company focuses on systems that can monitor and manage water conditions on an ongoing basis.

At the core of ECOPEACE’s work are autonomous water-cleanup robots known as ECOBOT. These machines operate directly on lakes, reservoirs and rivers, removing algae and surface waste while also collecting information about water quality. The idea is to combine cleaning with constant observation so changes in water conditions do not go unnoticed.

Alongside the robots, ECOPEACE uses a filtration and treatment system designed to process polluted water continuously. This system filters out contaminants using fine metal filters and treats the water using electrical processes. It also cleans itself automatically, which allows it to run for long periods without frequent manual maintenance.

The role of AI in this setup is largely about decision-making rather than direct control. Sensors placed across the water body collect data such as pollution levels and water quality indicators. The software then analyses this data to spot early signs of issues like algae growth. Based on these patterns, the system adjusts how the robots and filtration units operate, such as changing treatment intensity or water flow. In simple terms, the technology helps the system respond sooner instead of waiting for visible problems to appear.
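The decision loop described above can be sketched in a few lines. This is an illustrative policy under stated assumptions, not ECOPEACE's software: the function names, the 30.0 alert level, the three-reading window and the sample chlorophyll values are all hypothetical.

```python
def rising_trend(readings, window=3):
    """Return True when the mean of the latest window exceeds the
    mean of the window before it (a crude early-warning signal)."""
    if len(readings) < 2 * window:
        return False
    recent = sum(readings[-window:]) / window
    previous = sum(readings[-2 * window:-window]) / window
    return recent > previous


def treatment_intensity(readings, alert_level=30.0, window=3):
    """Pick a treatment setting from sensor readings.

    Hypothetical policy: "high" once readings cross alert_level,
    "medium" while they are still below it but climbing, else "low".
    """
    if not readings:
        return "low"
    if readings[-1] >= alert_level:
        return "high"
    if rising_trend(readings, window):
        return "medium"
    return "low"


# Made-up chlorophyll-a readings in micrograms per litre.
chlorophyll = [8.0, 8.5, 8.2, 9.5, 11.0, 13.5]
setting = treatment_intensity(chlorophyll)
```

The "medium" branch is the point of the exercise: the system reacts to a climbing trend before the readings reach a level where a bloom would be visible.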

ECOPEACE has already deployed these systems across several reservoirs, rivers and urban waterways in South Korea. Those projects have helped refine how the robots, sensors and software work together in real environments rather than controlled test sites.

Building on that experience, the company has begun expanding beyond Korea. It is currently running pilot and proof-of-concept projects in Singapore and the United Arab Emirates. These deployments are testing how the technology performs in dense urban settings where waterways are closely linked to public health, infrastructure and daily city life.

Both regions have invested heavily in smart city initiatives and water management, making them suitable test beds for automated monitoring and cleanup systems. The pilots focus on algae control, surface cleaning and real-time tracking of water quality rather than large-scale rollout.

As cities continue to grow and climate-related pressures on water systems increase, managing waterways is becoming less about occasional intervention and more about continuous oversight. ECOPEACE’s approach reflects that shift by using automation and data to address problems early and reduce the need for reactive cleanup later.

December 30, 2025

How Korea is trying to take control of its AI future.

SK Telecom, South Korea’s largest mobile operator, has unveiled A.X K1, a hyperscale artificial intelligence model with 519 billion parameters. The model sits at the center of a government-backed effort to build advanced AI systems and domestic AI infrastructure within Korea. This comes at a time when companies in the United States and China largely dominate the development of the most powerful large language models.

Rather than framing A.X K1 as just another large language model, SK Telecom is positioning it as part of a broader push to build sovereign AI capacity from the ground up. The model is being developed as part of the Korean government’s Sovereign AI Foundation Model project, which aims to ensure that core AI systems are built, trained and operated within the country. In simple terms, the initiative focuses on reducing reliance on foreign AI platforms and cloud-based AI infrastructure, while giving Korea more control over how artificial intelligence is developed and deployed at scale.

One of the gaps this approach is trying to address is how AI knowledge flows across a national ecosystem. Today, the most powerful AI foundation models are often closed, expensive and concentrated within a small number of global technology companies. A.X K1 is designed to function as a “teacher model,” meaning it can transfer its capabilities to smaller, more specialized AI systems. This allows developers, enterprises and public institutions to build tailored AI tools without starting from scratch or depending entirely on overseas AI providers.
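SK Telecom has not detailed how A.X K1 would pass its capabilities on. One widely used technique for teacher-to-student transfer is knowledge distillation, in which a small model is trained to match the teacher's softened output probabilities. The sketch below computes that loss for a single example in plain Python; the logits and the temperature of 2.0 are made up for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over softened distributions, scaled by
    temperature squared as is conventional in distillation."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
    return kl * temperature ** 2


# Hypothetical logits over three candidate tokens.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]
```

A student whose outputs resemble the teacher's (`close_student`) gets a smaller loss than one that disagrees (`far_student`), and training pushes the student toward the former.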

That distinction matters because most real-world applications of artificial intelligence do not require massive models operating independently. They require focused, reliable AI systems designed for specific use cases such as customer service, enterprise search, manufacturing automation or mobility. By anchoring those systems to a large, domestically developed foundation model, SK Telecom and its partners are aiming to create a more resilient and self-sustaining AI ecosystem.

The effort also reflects a shift in how AI is being positioned for everyday use. SK Telecom plans to connect A.X K1 to services that already reach millions of users, including its AI assistant platform A., which operates across phone calls, messaging, web services and mobile applications. The broader goal is to make advanced AI feel less like a distant research asset and more like an embedded digital infrastructure that supports daily interactions.

This approach extends beyond consumer-facing services. Members of the SKT consortium are testing how the hyperscale AI model can support industrial and enterprise applications, including manufacturing systems, game development, robotics and autonomous technologies. The underlying logic is that national competitiveness in artificial intelligence now depends not only on model performance, but on whether those models can be deployed, adapted and validated in real-world environments.

There is also a hardware dimension to the project. Operating an AI model at the 500-billion-parameter scale places heavy demands on computing infrastructure, particularly memory performance and communication between processors. A.X K1 is being used to test and validate Korea’s semiconductor and AI chip capabilities under real workloads, linking large-scale AI software development directly to domestic semiconductor innovation.
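A back-of-the-envelope calculation shows why memory is the pressure point. The sketch below counts only the memory needed to hold 519 billion weights; it ignores activations, caches and optimizer state, which add more. The article does not say which numeric precision A.X K1 uses, and the 80 GB device capacity in the usage note is a hypothetical figure.

```python
import math

PARAMETERS = 519e9  # parameter count reported for A.X K1

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAMETER = {"fp32": 4, "bf16": 2, "int8": 1}


def weight_memory_gb(parameters, bytes_per_parameter):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bytes_per_parameter / 1e9


def min_devices(parameters, bytes_per_parameter, device_memory_gb):
    """Fewest devices whose combined memory can hold the weights."""
    needed = weight_memory_gb(parameters, bytes_per_parameter)
    return math.ceil(needed / device_memory_gb)


for precision, size in BYTES_PER_PARAMETER.items():
    print(precision, weight_memory_gb(PARAMETERS, size), "GB")
```

At 2 bytes per parameter the weights alone come to 1,038 GB, so `min_devices(PARAMETERS, 2, 80)` gives 13 devices before any working memory is counted. Spreading a model across that many processors is what makes inter-processor communication a bottleneck.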

The initiative brings together technology companies, universities and research institutions, including Krafton, KAIST and Seoul National University. Each contributes specialized expertise ranging from data validation and multimodal AI research to system scalability. More than 20 institutions have already expressed interest in testing and deploying the model, reinforcing the idea that A.X K1 is being treated as shared national AI infrastructure rather than a closed commercial product.

Looking ahead, SK Telecom plans to release A.X K1 as open-source AI software, alongside APIs and portions of the training data. If fully implemented, the move could lower barriers for developers, startups and researchers across Korea’s AI ecosystem, enabling them to build on top of a large-scale foundation model without incurring the cost and complexity of developing one independently.

The hidden cost of scaling AI: infrastructure, energy, and the push for liquid cooling.

As artificial intelligence models grow larger and more demanding, the quiet pressure point isn’t the algorithms themselves—it’s the AI infrastructure that has to run them. Training and deploying modern AI models now requires enormous amounts of computing power, which creates a different kind of challenge: heat, energy use and space inside data centers. This is the context in which Supermicro and NVIDIA’s collaboration on AI infrastructure begins to matter.

Supermicro designs and builds large-scale computing systems for data centers. It has now expanded its support for NVIDIA’s Blackwell generation of AI chips with new liquid-cooled server platforms built around the NVIDIA HGX B300. The announcement isn’t just about faster hardware. It reflects a broader effort to rethink how AI data center infrastructure is built as facilities strain under rising power and cooling demands.

At a basic level, the systems are designed to pack more AI chips into less space while using less energy to keep them running. Instead of relying mainly on air cooling (fans, chillers and large amounts of electricity), these liquid-cooled AI servers circulate liquid directly across critical components. That approach removes heat more efficiently, allowing servers to run denser AI workloads without overheating or wasting energy.

Why does that matter outside a data center? Because AI doesn’t scale in isolation. As models become more complex, the cost of running them rises quickly, not just in hardware budgets, but in electricity use, water consumption and physical footprint. Traditional air-cooling methods are increasingly becoming a bottleneck, limiting how far AI systems can grow before energy and infrastructure costs spiral.

This is where the Supermicro–NVIDIA partnership fits in. NVIDIA supplies the computing engines—the Blackwell-based GPUs designed to handle massive AI workloads. Supermicro focuses on how those chips are deployed in the real world: how many GPUs can fit in a rack, how they are cooled, how quickly systems can be assembled and how reliably they can operate at scale in modern data centers. Together, the goal is to make high-density AI computing more practical, not just more powerful.

The new liquid-cooled designs are aimed at hyperscale data centers and so-called AI factories, facilities built specifically to train and run large AI models continuously. By increasing GPU density per rack and removing most of the heat through liquid cooling, these systems aim to ease a growing tension in the AI boom: the need for more compute without an equally dramatic rise in energy waste.

Just as important is speed. Large organizations don’t want to spend months stitching together custom AI infrastructure. Supermicro’s approach packages compute, networking and cooling into pre-validated data center building blocks that can be deployed faster. In a world where AI capabilities are advancing rapidly, time to deployment can matter as much as raw performance.

Stepping back, this development says less about one product launch and more about a shift in priorities across the AI industry. The next phase of AI growth isn’t only about smarter models—it’s about whether the physical infrastructure powering AI can scale responsibly. Efficiency, power use and sustainability are becoming as critical as speed.

Humanoids are moving from research labs into real industries — and capital is finally catching up.

Humanoid robots are shifting from sci-fi speculation to engineering reality, and the pace of progress is prompting investors to reassess how the next decade of physical automation will unfold. ALM Ventures has launched a new US$100 million early-stage fund aimed squarely at this moment—one where advances in robot control, embodied AI and spatial intelligence are beginning to converge into something commercially meaningful.

ALM Ventures Fund I is designed for the earliest stages of company formation, targeting seed and pre-seed teams building the foundations of humanoid deployment. It’s a concentrated fund that seeks to take early ownership in a sector that many now consider the next major technological frontier.

For Founder and General Partner Modar Alaoui, the timing is not accidental. “After years of research, humanoids are finally entering a phase where performance, reliability and cost are converging toward commercial viability,” he said. “What the category needs now is focused capital and deep technical diligence to turn prototypes into scalable, enduring companies.”

That framing captures a shift happening across robotics: the field is moving out of the lab and into early commercial readiness. Improvements in perception systems, model-based reasoning and motion control are accelerating the transition. Advances in simulation are also lowering the complexity and cost of integrating humanoid platforms into real environments. As these systems become more capable, the gap between research prototypes and market-ready products is narrowing.

ALM Ventures is positioning itself at this inflection point. Fund I’s thesis centers on the core technologies required to scale humanoids safely and economically. This includes next-generation robot platforms, spatial reasoning engines, embodied intelligence models, world-modeling systems and the infrastructure needed for early deployment. Rather than chasing every robotics trend, the fund is concentrating on the essential layers that will determine whether humanoids can work reliably outside controlled settings.

The firm isn’t starting from zero. During the fund’s formation, ALM Ventures made ten early investments that directly align with its investment focus. The portfolio includes companies building at different layers of the humanoid stack, such as Sanctuary AI, Weave Robotics, Emancro, High Torque Robotics, MicroFactory, Mbodi, Adamo, Haptica Robotics, UMA and O-ID. The list reflects a broad but intentional spread, from hardware to intelligence to manufacturing approaches, all oriented toward enabling scalable physical AI.

Beyond capital, ALM Ventures has been shaping the ecosystem through its global Humanoids Summit series in Silicon Valley, London and Tokyo. The series gives the firm early visibility into emerging technologies, pre-incorporation teams and the senior leaders steering the global robotics landscape. That vantage point has helped the firm identify where commercialization is truly taking root and where bottlenecks still exist.

The rise of humanoids is often compared to the early days of self-driving cars: a long arc of research suddenly meeting an acceleration point. What separates this moment is that advances in embodied AI and spatial intelligence are giving robots a more intuitive understanding of the physical world, making them easier to deploy, teach and scale. ALM Ventures’ Fund I is an attempt to capture that transition while shaping the companies that could define the next technological era.

With US$100 million dedicated to the earliest builders in the space, ALM Ventures is signaling its belief that humanoids are not just another robotics cycle—they may be the next major platform shift in AI.

Rethinking 3D modelling for a world that generates too much, too quickly.

MicroCloud Hologram Inc. (NASDAQ: HOLO), a technology service provider recognized for its holography and imaging systems, is now expanding into a more advanced realm: a quantum-driven 3D intelligent model. The goal is to generate detailed 3D models and images with far less manual effort — a need that has only grown as industries flood the world with more visual data every year.

The concept is straightforward, even if the technology behind it isn’t. Traditional 3D modeling workflows are slow, fragmented and depend on large teams to clean datasets, train models, adjust parameters and fine-tune every output. HOLO is trying to close that gap by combining quantum computing with AI-powered 3D modeling, enabling the system to process massive datasets quickly and automatically produce high-precision 3D assets with much less human involvement.

To achieve this, the company developed a distributed architecture comprising several specialized subsystems. One subsystem collects and cleans raw visual data from different sources. Another uses quantum deep learning to understand patterns in that data. A third converts the trained model into ready-to-use 3D assets based on user inputs. Additional modules manage visualization, secure data storage and system-wide protection, all supported by quantum-level encryption. Each subsystem runs in its own container and communicates through encrypted interfaces, allowing flexible upgrades and scaling without disrupting the entire system.

Why this matters: Industries ranging from gaming and film to manufacturing, simulation and digital twins are rapidly increasing their reliance on 3D content. The real bottleneck isn’t creativity — it’s time. Producing accurate, high-quality 3D assets still requires a huge amount of manual processing. HOLO’s approach attempts to lighten that workload by utilizing quantum tools to speed up data processing, model training, generation and scaling, while keeping user data secure.

According to the company, the system’s biggest advantages include its ability to handle massive datasets more efficiently, generate precise 3D models with fewer manual steps, and scale easily thanks to its modular, quantum-optimized design. Whether quantum computing will become a mainstream part of 3D production remains an open question. Still, the model shows how companies are beginning to rethink traditional 3D workflows as demand for high-quality digital content continues to surge.

Where smarter storage meets smarter logistics.

E-commerce keeps growing, and with it the number of products moving through warehouses every day. Items vary more than ever: different shapes, seasonal packaging, limited editions and constantly updated designs. At the same time, many logistics centers are dealing with labour shortages and rising pressure to automate.

But today’s image-recognition AI isn’t built for this level of change. Most systems rely on deep-learning models that need to be adjusted or retrained whenever new products appear. Every update — whether it’s a new item or a packaging change — adds extra time, energy use and operational cost. And for warehouses handling huge product catalogs, these retraining cycles can slow everything down.

KIOXIA, a company known for its memory and storage technologies, is working on a different approach. In a new collaboration with Tsubakimoto Chain and EAGLYS, the team has developed an AI-based image recognition system that is designed to adapt more easily as product lines grow and shift. The idea is to help logistics sites automatically identify items moving through their workflows without constantly reworking the core AI model.

At the center of the system is KIOXIA’s AiSAQ software paired with its Memory-Centric AI technology. Instead of retraining the model each time new products appear, the system stores new product data — images, labels and feature information — directly in high-capacity storage. This allows warehouses to add new items quickly without altering the original AI model.

Because storing more data can lead to longer search times, the system also indexes the stored product information and transfers the index into SSD storage. This makes it easier for the AI to retrieve relevant features fast, using a Retrieval-Augmented Generation–style method adapted for image recognition.
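The retrieval idea can be sketched as follows: a fixed feature extractor turns each image into a vector, and recognition becomes a nearest-neighbour lookup over stored vectors, so adding a product means adding a row rather than retraining. This is a toy illustration, not KIOXIA's AiSAQ; the `ProductIndex` class, the three-dimensional feature vectors and the product names are hypothetical, and the brute-force in-memory search stands in for an indexed search on SSD.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


class ProductIndex:
    """Toy in-memory stand-in for a storage-backed feature index."""

    def __init__(self):
        self._entries = []  # list of (label, feature_vector)

    def add(self, label, features):
        """Register a product by storing its features; no retraining."""
        self._entries.append((label, list(features)))

    def identify(self, features):
        """Return the label whose stored features are most similar."""
        if not self._entries:
            raise LookupError("index is empty")
        best = max(self._entries,
                   key=lambda entry: cosine_similarity(entry[1], features))
        return best[0]


index = ProductIndex()
index.add("green tea 500ml", [0.9, 0.1, 0.0])
index.add("cola 350ml", [0.1, 0.9, 0.1])
# A new seasonal package is added later without touching the extractor.
index.add("green tea 500ml (spring label)", [0.7, 0.1, 0.6])
```

Brute-force search scans every stored vector, which is why a production system needs an index once the catalog grows large.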

The collaboration will be showcased at the 2025 International Robot Exhibition in Tokyo. Visitors will see the system classify items in real time as they move along a conveyor, drawing on stored product features to identify them instantly. The demonstration aims to illustrate how logistics sites can handle continuously changing inventories with greater accuracy and reduced friction.

Overall, as logistics networks become increasingly busy and product lines evolve faster than ever, this memory-driven approach provides a practical way to keep automation adaptable and less fragile.

Brains, bots and the future: Who’s really in control?

When British-Canadian cognitive psychologist and computer scientist Geoffrey Hinton joked that his ex-girlfriend once used ChatGPT to help her break up with him, he wasn’t exaggerating. The father of deep learning was pointing to something stranger: how machines built to mimic language have begun to mimic thought — and how even their creators no longer agree on what that means.

In that one quip — part humor, part unease — Hinton captured the paradox at the center of the world’s most important scientific divide. Artificial intelligence has moved beyond code and circuits into the realm of psychology, economics and even philosophy. Yet among those who know it best, the question has turned unexpectedly existential: what, if anything, do large language models truly understand?

Across the world’s AI labs, that question has split the community into two camps — believers and skeptics, prophets and heretics. One side sees systems like ChatGPT, Claude, and Gemini as the dawn of a new cognitive age. The other insists they’re clever parrots with no grasp of meaning, destined to plateau as soon as the data runs out. Between them stands a trillion-dollar industry built on both conviction and uncertainty.

Hinton, who spent a decade at Google refining the very neural networks that now power generative AI, has lately sounded like a man haunted by his own invention. Speaking to Scott Pelley in a CBS 60 Minutes interview that aired on October 8, 2023, Hinton said, “I think we're moving into a period when for the first time ever we may have things more intelligent than us.” He said it not with triumph, but with visible worry.

Yoshua Bengio, his longtime collaborator, sees it differently. Speaking at the All In conference in Montreal, he told TIME that future AI systems “will have stronger and stronger reasoning abilities, more and more knowledge,” while cautioning about ensuring they “act according to our norms.” And then there’s Gary Marcus, the cognitive scientist and enduring critic, who dismisses the hype outright: “These systems don’t understand the world. They just predict the next word.”

It’s a rare moment in science when three pioneers of the same field disagree so completely — not about ethics or funding, but about the very nature of progress. And yet that disagreement now shapes how the future of AI will unfold.

In the span of just two years, large language models have gone from research curiosities to corporate cornerstones. Banks use them to summarize reports. Lawyers draft contracts with them. Pharmaceutical firms explore protein structures through them. Silicon Valley is betting that scaling these models — training them on ever-larger datasets with ever-denser computers — will eventually yield something approaching reasoning, maybe even intelligence.

It’s the “bigger is smarter” philosophy, and so far it has worked. OpenAI’s GPT-4, Anthropic’s Claude, and Google’s Gemini have grown exponentially in capability. They can write code, explain math, outline business plans, even simulate empathy. For most users, the line between prediction and understanding has already blurred beyond meaning. Kelvin So, who is now conducting AI research at PolyU SPEED, commented, “AI scientists today are inclined to believe we have learnt a bitter lesson in the advancement from the traditional AI to the current LLM paradigm. That said, scaling law, instead of human-crafted complicated rules, is the ultimate law governing AI.”

But inside the labs, cracks are showing. Scaling models has become staggeringly expensive, and the returns are diminishing. A growing number of researchers suspect that raw scale alone cannot unlock true comprehension, and that these systems are learning syntax, not semantics; imitation, not insight.

That belief fuels a quiet counter-revolution. Instead of simply piling on data and GPUs, some researchers are pursuing hybrid intelligence — systems that combine statistical learning with symbolic reasoning, causal inference, or embodied interaction with the physical world. The idea is that intelligence requires grounding — an understanding of cause, consequence, and context that no amount of text prediction can supply.

Yet the results speak for themselves. In practice, language models are already transforming industries faster than regulation can keep up. Marketing departments run on them. Customer support, logistics and finance teams depend on them. Even scientists now use them to generate hypotheses, debug code and summarize literature. For every cautionary voice, there are a dozen entrepreneurs who see this technology as a force reshaping every industry. That gap — between what these models actually are and what we hope they might become — defines this moment. It’s a time of awe and unease, where progress races ahead even as understanding lags behind.

Part of the confusion stems from how these systems work. A large language model doesn’t store facts like a database. It predicts what word is most likely to come next in a sequence, based on patterns in vast amounts of text. Behind this seemingly simple prediction mechanism lies a sophisticated architecture. The tokenizer is one of the key innovations behind modern language models. It takes text and chops it into smaller, manageable pieces the AI can understand. These pieces are then turned into numbers, giving the model a way to “read” human language. By doing this, the system can spot context and relationships between words — the building blocks of comprehension.
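The two steps described above, turning text into numbers and then predicting the next piece, can be illustrated with a deliberately tiny sketch. It rests on simplifying assumptions: the tokenizer splits on whitespace (real tokenizers use subword units), and the "model" is a table of bigram counts rather than a neural network. All function names and the sample sentence are made up for illustration.

```python
from collections import Counter, defaultdict


def tokenize(text):
    # Simplification: whitespace splitting instead of subword tokenization.
    return text.lower().split()


def build_vocab(tokens):
    # Assign each distinct token an integer id in order of first appearance.
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def train_bigram(ids):
    # Count how often each token id is followed by each other id.
    counts = defaultdict(Counter)
    for prev, nxt in zip(ids, ids[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token_id):
    # Return the most frequent follower, or None if the token was never
    # seen with a successor.
    followers = counts.get(token_id)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
tokens = tokenize(corpus)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]          # text as numbers
counts = train_bigram(ids)

id_to_token = {i: t for t, i in vocab.items()}
predicted = id_to_token[predict_next(counts, vocab["the"])]
print(ids)
print("after 'the' the model predicts:", predicted)
```

Nothing here stores a fact such as "cats sleep"; the prediction falls out of counted patterns. Large language models replace the count table with billions of learned parameters, but the objective of guessing the next token is the same.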

Inside the model, mechanisms such as multi-head attention enable the system to examine many aspects of information simultaneously, much as a human reader might track several storylines at once.
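As a rough illustration of what "multi-head" means, the sketch below splits each token's vector into slices and runs scaled dot-product attention on every slice independently before joining the results. It is a simplified assumption-laden version: real models apply learned projection matrices to produce queries, keys and values, which are omitted here, and the token vectors are invented.

```python
import math


def softmax(xs):
    # Numerically stable softmax over a list of scores.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_head(queries, keys, values):
    # Scaled dot-product attention for one head.
    d = len(keys[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return out


def multi_head_self_attention(x, num_heads):
    # Assumption: no learned projections; each head simply sees its own
    # slice of the embedding dimensions.
    dim = len(x[0])
    if dim % num_heads != 0:
        raise ValueError("embedding size must be divisible by num_heads")
    head_dim = dim // num_heads
    head_outputs = []
    for h in range(num_heads):
        lo, hi = h * head_dim, (h + 1) * head_dim
        sliced = [row[lo:hi] for row in x]
        head_outputs.append(attention_head(sliced, sliced, sliced))
    # Concatenate the heads back into one vector per token.
    return [
        [val for head in head_outputs for val in head[i]]
        for i in range(len(x))
    ]


token_vectors = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 1.0, 0.0],
    [1.0, 1.0, 0.0, 0.0],
]
output = multi_head_self_attention(token_vectors, num_heads=2)
for row in output:
    print([round(v, 3) for v in row])
```

Each head computes its own set of attention weights, so one head can focus on one relationship between tokens while another tracks a different one. That is the sense in which the model examines several aspects of the input at once.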

Reinforcement learning, pioneered by Richard Sutton, a professor of computing science at the University of Alberta, and Andrew Barto, Professor Emeritus at the University of Massachusetts, mimics human trial-and-error learning. The AI develops “value functions” that predict the long-term rewards of its actions. Together, these technologies enable machines to recognize patterns, make predictions and generate text that feels strikingly human — yet beneath this technical progress lies the very divide that cuts to the heart of how intelligence itself is defined.
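The notion of a value function predicting long-term reward can be sketched with temporal-difference learning, a standard method in reinforcement learning. The example is a toy under stated assumptions: a hypothetical corridor of four states where the agent always steps right and earns a reward of 1 only on reaching the end. The function name and the parameters `gamma` (discount) and `alpha` (learning rate) are illustrative choices, not taken from the article.

```python
def td0_values(num_states=4, gamma=0.9, alpha=0.1, episodes=2000):
    # values[s] estimates the discounted future reward from state s.
    # The extra final slot is the terminal state, whose value stays 0.
    values = [0.0] * (num_states + 1)
    for _ in range(episodes):
        state = 0
        while state < num_states:
            next_state = state + 1  # fixed policy: always move right
            reward = 1.0 if next_state == num_states else 0.0
            # TD(0) update: nudge the estimate toward the observed reward
            # plus the discounted estimate for the next state.
            td_target = reward + gamma * values[next_state]
            values[state] += alpha * (td_target - values[state])
            state = next_state
    return values


values = td0_values()
print([round(v, 3) for v in values])
```

After repeated episodes, states closer to the goal carry higher values, and the estimates approach 1, 0.9, 0.81 and 0.729 counting back from the end of the corridor. The agent has learned, from experience alone, how much future reward each position is worth.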

Yet at scale, that simple process begins to yield emergent behavior — reasoning, problem-solving, even flashes of creativity that surprise their creators. The result is something that looks, sounds and increasingly acts intelligent — even if no one can explain exactly why.

That opacity worries not just philosophers, but engineers. The “black box problem” — our inability to interpret how neural networks make decisions — has turned into a scientific and safety concern. If we can’t explain a model’s reasoning, can we trust it in critical systems like healthcare or defense?

Companies like Anthropic are trying to address that with “constitutional AI,” embedding human-written principles into model training to guide behavior. Others, like OpenAI, are experimenting with internal oversight teams and adversarial testing to catch dangerous or misleading outputs. But no approach yet offers real transparency. We’re effectively steering a ship whose navigation system we don’t fully understand. “We need governance frameworks that evolve as quickly as AI itself,” says Felix Cheung, Founding Chairman of RegTech Association of Hong Kong (RTAHK). “Technical safeguards alone aren't enough — transparent monitoring and clear accountability must become industry standards.”

Meanwhile, the commercial race is accelerating. Venture capital is flowing into AI startups at record speed. OpenAI’s valuation reportedly exceeds US$150 billion; Anthropic, backed by Amazon and Google, isn’t far behind. The bet is simple: that generative AI will become as indispensable to modern life as the internet itself.

And yet, not everyone is buying into that vision. The open-source movement — championed by players like Meta’s Llama, Mistral in France, and a fast-growing constellation of independent labs — argues that democratizing access is the only way to ensure both innovation and accountability. If powerful AI remains locked behind corporate walls, they warn, progress will narrow to the priorities of a few firms.

But openness cuts both ways. Publicly available models are harder to police, and their misuse — from disinformation to deepfakes — grows as easily as innovation does. Regulators are scrambling to balance risk and reward. The European Union’s AI Act is the world’s most comprehensive attempt at governance, but even it struggles to define where to draw the line between creativity and control.

This isn’t just a scientific argument anymore. It’s a geopolitical one. The United States, China, and Europe are each pursuing distinct AI strategies: Washington betting on private-sector dominance, Beijing on state-led scaling, Brussels on regulation and ethics. Behind the headlines, compute power is becoming a form of soft power. Whoever controls access to the chips, data, and infrastructure that fuel AI will control much of the digital economy.

That reality is forcing some uncomfortable math. Training frontier models already consumes energy on the scale of small nations. Data centers now rise next to hydroelectric dams and nuclear plants. Efficiency — once a technical concern — has become an economic and environmental one. As demand grows, so does the incentive to build smaller, smarter, more efficient systems. The industry’s next leap may not come from scale at all, but from constraint.

For all the noise, one truth keeps resurfacing: large language models are tools, not oracles. Their intelligence — if we can call it that — is borrowed from ours. They are trained on human text, human logic, human error. Every time a model surprises us with insight, it is, in a sense, holding up a mirror to collective intelligence.

That’s what makes this schism so fascinating. It’s not really about machines. It’s about what we believe intelligence is — pattern or principle, simulation or soul. For believers like Bengio, intelligence may simply be prediction done right. For critics like Marcus, that’s a category mistake: true understanding requires grounding in the real world, something no model trained on text can ever achieve.

The public, meanwhile, is less interested in metaphysics. To most users, these systems work — and that’s enough. They write emails, plan trips, debug spreadsheets, summarize meetings. Whether they “understand” or not feels academic. But for the scientists, that distinction remains critical, because it determines where AI might ultimately lead.

Even inside the companies building them, that tension shows. OpenAI’s Sam Altman has hinted that scaling can’t continue forever. At some point, new architectures (possibly combining logic, memory, or embodied data) will be needed. DeepMind’s Demis Hassabis says something similar: intelligence, he argues, will come not just from prediction, but from interaction with the world.

It’s possible both are right. The future of AI may belong to hybrid systems — part statistical, part symbolic — that can reason across multiple modes of information: text, image, sound, action. The line between model and agent is already blurring, as LLMs gain the ability to browse the web, run code, and call external tools. The next generation won’t just answer questions; it will perform tasks.

For startups, the opportunity — and the risk — lies in that transition. The most valuable companies in this new era may not be those that build the biggest models, but those that build useful ones: specialized systems tuned for medicine, law, logistics, or finance, where reliability matters more than raw capability. The winners will understand that scale is a means, not an end.

And for society, the challenge is to decide what kind of intelligence we want to live with. If we treat these models as collaborators — imperfect, explainable, constrained — they could amplify human potential on a scale unseen since the printing press. If we chase the illusion of autonomy, they could just as easily entrench bias, confusion, and dependency.

The debate over large language models will not end in a lab. It will play out in courts, classrooms, boardrooms, and living rooms — anywhere humans and machines learn to share the same cognitive space. Whether we call that cooperation or competition will depend on how we design, deploy, and, ultimately, define these tools.

Perhaps Hinton’s offhand remark about ChatGPT’s part in his breakup wasn’t just a joke. It was an omen. AI is no longer something we use; it’s something we’re reflected in. Every model trained on our words becomes a record of who we are: our reasoning, our prejudices, our brilliance, our contradictions. The schism among scientists mirrors the one within ourselves: fascination colliding with fear, ambition tempered by doubt.

In the end, the question isn’t whether LLMs are the future. It’s whether we are ready for a future built in their image.