A new AI model replaces months of simulation with near-instant predictions, changing how spacecraft operations are prepared

Updated

April 24, 2026 10:53 AM

Northrop Grumman's Stargazer aircraft serves as the mother ship for Pegasus, an air-launched orbital rocket. PHOTO: UNSPLASH

Flexcompute, a startup that builds software to simulate real-world physics, is working with Northrop Grumman to change how space missions are prepared. Together, they have developed an AI-based system that can predict how spacecraft respond during critical manoeuvres such as docking—when one spacecraft moves in and connects with another in orbit. These steps have traditionally taken months of preparation.

At the centre of this work is a long-standing problem in space operations. When a spacecraft fires its thrusters, the exhaust plume interacts with nearby surfaces. These interactions can affect movement, temperature and stability. Because these effects are difficult to test in real conditions, engineers have relied on large volumes of computer simulations to estimate outcomes before a mission. That process is slow and resource-intensive.

The new system replaces much of that workflow with a trained AI model. Rather than requiring engineers to run millions of simulations, the model learns patterns from physics-based data and makes predictions in seconds. It also reports a measure of uncertainty, which helps engineers judge how far to trust each prediction when making decisions.
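To illustrate the general idea of a fast surrogate that also reports uncertainty, here is a minimal Python sketch. It is not Flexcompute's or Northrop Grumman's method and does not use NVIDIA PhysicsNeMo. The `toy_simulation` function, the inverse-square force law, the noise level and the bootstrap-ensemble approach are all assumptions chosen for illustration.

```python
import math
import random
import statistics


def toy_simulation(distance_m, rng):
    """Hypothetical stand-in for an expensive plume simulation.

    Assumes force falls off with the square of distance, plus small noise.
    """
    return (10.0 / distance_m ** 2) * math.exp(rng.gauss(0.0, 0.05))


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b * x; returns (a, b)."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def train_ensemble(distances, forces, n_models=50, seed=0):
    """Fit one log-log line per bootstrap resample of the training data."""
    rng = random.Random(seed)
    log_d = [math.log(d) for d in distances]
    log_f = [math.log(f) for f in forces]
    n = len(log_d)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_line([log_d[i] for i in idx], [log_f[i] for i in idx]))
    return models


def predict(models, distance_m):
    """Return (mean prediction, relative spread across the ensemble)."""
    log_d = math.log(distance_m)
    preds = [math.exp(a + b * log_d) for a, b in models]
    mean = statistics.fmean(preds)
    return mean, statistics.stdev(preds) / mean


# "Expensive" runs are done once, up front, to build the training set.
data_rng = random.Random(42)
train_distances = [1.0 + 0.1 * i for i in range(41)]  # 1.0 m to 5.0 m
train_forces = [toy_simulation(d, data_rng) for d in train_distances]
ensemble = train_ensemble(train_distances, train_forces)

# Afterwards, each prediction is a handful of arithmetic operations.
near_mean, near_spread = predict(ensemble, 2.0)   # inside the training range
far_mean, far_spread = predict(ensemble, 20.0)    # far outside it
print(f"2 m: {near_mean:.3f} (relative spread {near_spread:.3%})")
print(f"20 m: {far_mean:.4f} (relative spread {far_spread:.3%})")
```

In this toy setup the ensemble's spread grows when the query lies far outside the training data, which is the kind of signal an engineer could use to decide when a full simulation is still needed.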

"At Northrop Grumman, we're pioneering physics AI to accelerate design and solve complex simulation and modelling problems like plume impingement—critical for station keeping, rendezvous and space robotics. Simply put: we're pushing the boundaries of advanced space operations", said Fahad Khan, Director of AI Foundations at Northrop Grumman. "Partnering with Flexcompute and NVIDIA, we're accelerating innovation and mission timelines to deliver superior space capabilities for customers at the speed they need".

The system is built using technology from NVIDIA, which provides the computing framework behind the model. Flexcompute has adapted it to handle the specific challenges of spaceflight, including how gases expand and interact in a vacuum. The result is a tool that can simulate complex scenarios much faster while maintaining the level of accuracy needed for mission planning.

By shortening preparation time, the model changes how engineers approach spacecraft design and operations. Faster predictions mean teams can test more scenarios and adjust plans more quickly. The model also helps improve fuel use and extend the lifespan of spacecraft.

"Northrop Grumman's confidence reflects what sets Flexcompute apart", said Vera Yang, President and Co-Founder of Flexcompute. "We are able to take the most accurate and scalable physics foundations and evolve them into highly trained, customized Physics AI solutions that engineers can rely on. This work shows how we are transforming the role of simulation, not just speeding it up, but expanding what engineers can confidently solve and how quickly they can act".

The collaboration points to a broader shift in how engineering problems are being handled. Instead of relying only on detailed simulations that take time to run, companies are beginning to use AI systems that can approximate those results quickly while still reflecting the underlying physics.

"The industry's most ambitious space missions now demand a level of speed and precision that traditional engineering cycles can no longer sustain", said Tim Costa, vice president and general manager of computational engineering at NVIDIA. "By integrating NVIDIA PhysicsNeMo, Northrop Grumman and Flexcompute are transforming complex simulations like plume impingement from days of compute into seconds of insight, drastically accelerating the path from mission concept to orbit".

What emerges from this work is a shift in how missions are prepared. When prediction cycles move from months to seconds, testing and decision-making can happen faster. For space operations, where timing and precision are closely linked, that change could reshape how systems are built and run.

Keep Reading

A new safety layer aims to help robots sense people in real time without slowing production

Updated

March 17, 2026 1:02 AM

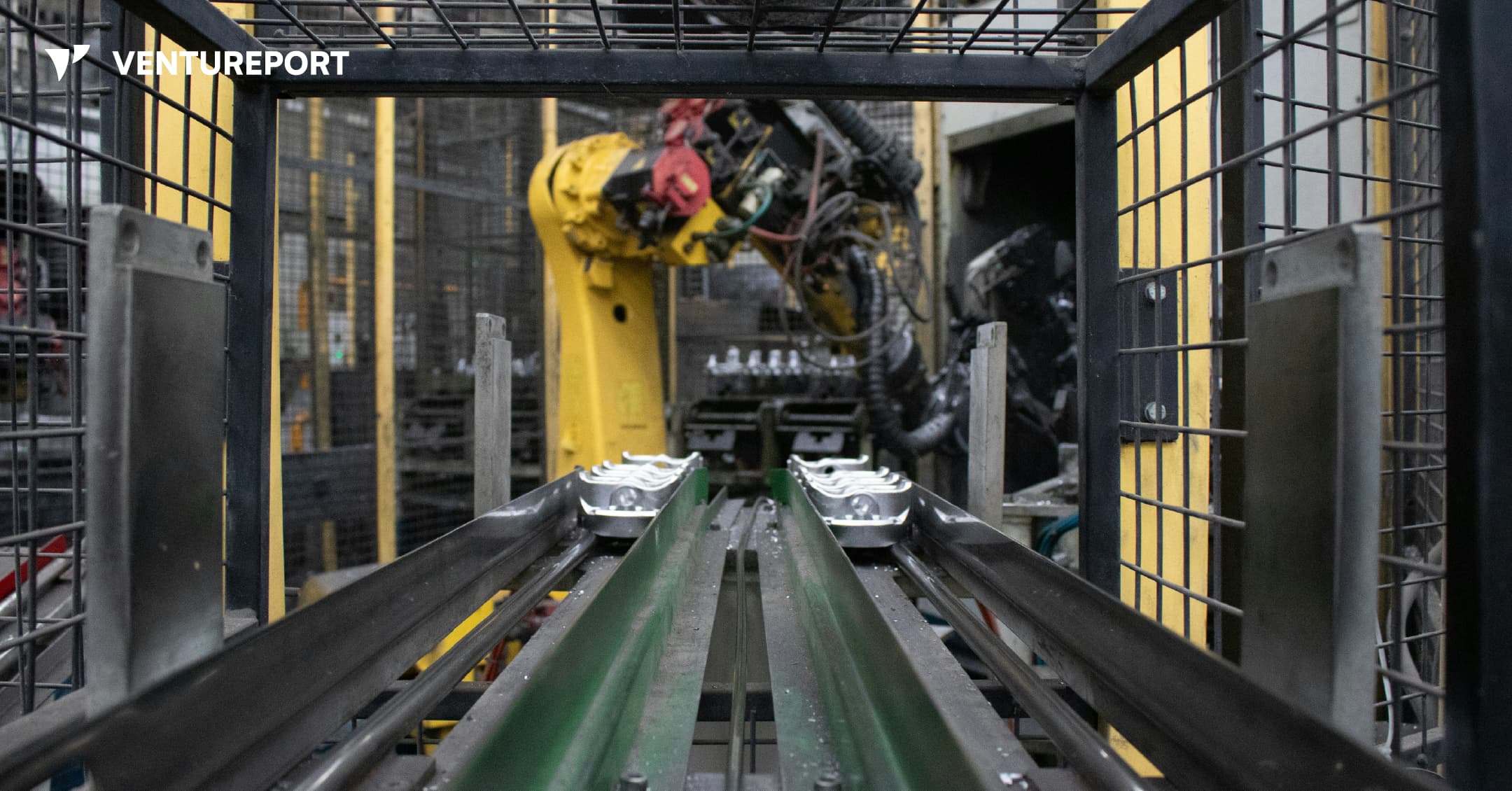

An industrial robot in a factory. PHOTO: UNSPLASH

Algorized has raised US$13 million in a Series A round to advance its AI-powered safety and sensing technology for factories and warehouses. The California- and Switzerland-based robotics startup says the funding will help expand a system designed to transform how robots interact with people. The round was led by Run Ventures, with participation from the Amazon Industrial Innovation Fund and Acrobator Ventures, alongside continued backing from existing investors.

At its core, Algorized is building what it calls an intelligence layer for “physical AI” — industrial robots and autonomous machines that function in real-world settings such as factories and warehouses. While generative AI has transformed software and digital workflows, bringing AI into physical environments presents a different challenge. In these settings, machines must not only complete tasks efficiently but also move safely around human workers.

This is where a clear gap exists. Today, most industrial robots rely on camera-based monitoring systems or predefined safety zones. For instance, when a worker steps into a marked area near a robotic arm, the system is programmed to slow down or stop the machine completely. This approach reduces the risk of accidents. However, it also means production lines can pause frequently, even when there is no immediate danger. In high-speed manufacturing environments, those repeated slowdowns can add up to significant productivity losses.

Algorized’s technology is designed to reduce that trade-off between safety and efficiency. Instead of relying solely on cameras, the company uses wireless signals, including ultra-wideband (UWB), mmWave and Wi-Fi, to sense human presence. By analysing small changes in these radio signals, the system can detect motion and breathing patterns in a space. This helps machines determine where people are and how they are moving, even in conditions where cameras may struggle, such as poor lighting, dust or visual obstruction.
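The principle of spotting movement from small changes in a radio signal can be sketched in a few lines of Python. This is a simplified illustration, not Algorized's algorithm: the amplitude samples, the window size and the threshold are hypothetical values, and the detector only checks how much the received signal strength fluctuates within each window.

```python
import statistics


def detect_motion(amplitudes, window=8, threshold=0.05):
    """Return one flag per full window of samples.

    A flag is True when the spread (population standard deviation) of the
    signal amplitude in that window exceeds the threshold.
    """
    flags = []
    for start in range(0, len(amplitudes) - window + 1, window):
        chunk = amplitudes[start:start + window]
        flags.append(statistics.pstdev(chunk) > threshold)
    return flags


# Hypothetical samples: a nearly constant signal in an empty room, then a
# fluctuating one as a person moves through the radio path.
still = [1.00, 1.01, 0.99, 1.00, 1.01, 1.00, 0.99, 1.00]
moving = [1.00, 1.20, 0.85, 1.15, 0.80, 1.25, 0.90, 1.10]

flags = detect_motion(still + moving)
print(flags)
```

A production system would work with much richer channel measurements and learned models, but the underlying cue is the same: people disturb the signal in ways an empty room does not.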

Importantly, this data is processed locally at the facility itself — not sent to a remote cloud server for analysis. In practical terms, this means decisions are made on-site, within milliseconds. Reducing this delay, or latency, allows robots to adjust their movements immediately instead of defaulting to a full stop. The aim is to create machines that can respond smoothly and continuously, rather than reacting in a binary stop-or-go manner.
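The difference between a binary stop-or-go response and a continuous one can be shown with a short Python sketch. The function names, distances and linear scaling rule below are assumptions made for illustration. They do not describe Algorized's Predictive Safety Engine and are not a safety-rated implementation.

```python
def binary_zone_speed(distance_m, zone_radius_m=2.0):
    """Conventional safety zone: full stop whenever a person is inside it."""
    return 0.0 if distance_m < zone_radius_m else 1.0


def continuous_speed(distance_m, stop_m=0.5, full_speed_m=2.0):
    """Scale speed smoothly with distance instead of stopping outright.

    Returns a fraction of full speed: 0.0 at or inside stop_m, 1.0 at or
    beyond full_speed_m, and a linear ramp in between.
    """
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= full_speed_m:
        return 1.0
    return (distance_m - stop_m) / (full_speed_m - stop_m)


# A worker walks towards the robot; distances are hypothetical.
for distance in (3.0, 1.25, 0.4):
    print(distance, binary_zone_speed(distance), continuous_speed(distance))
```

With these example numbers, a worker standing 1.25 m away halts the zone-based robot entirely, while the continuous controller keeps it running at half speed.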

With the new funding, Algorized plans to scale commercial deployments of its platform, known as the Predictive Safety Engine. The company will also invest in refining its intent-recognition models, which are designed to anticipate how humans are likely to move within a workspace. In parallel, it intends to expand its engineering and support teams across Europe and the United States. These efforts build on earlier public demonstrations and ongoing collaborations with manufacturing partners, particularly in the automotive and industrial sectors.

For investors, the appeal goes beyond safety compliance. As factories become more automated, even small improvements in uptime and workflow continuity can translate into meaningful financial gains. Because Algorized’s system works with existing wireless infrastructure, manufacturers may be able to upgrade machine awareness without overhauling their entire hardware setup.

More broadly, the company is addressing a structural limitation in industrial automation. Robotics has advanced rapidly in precision and power, yet human-robot collaboration is still governed by rigid safety systems that prioritise stopping over adapting. By combining wireless sensing with edge-based AI models, Algorized is attempting to give machines a more continuous awareness of their surroundings from the start.